
12/25/2023

Summarize bot

If you are using a language other than Spanish, please refer to the Requirements section to learn how to install the appropriate model.

When extracting the text from the article we usually get a lot of extra whitespace, mostly from line breaks (\n). We split the text on that character, strip the whitespace from each piece and join the pieces again. This is not strictly required, but it helps a lot while debugging the whole process.

Remove Common and Stop Words

At the top of the script we declare the paths of the stop words text files. These stop words are added to a set, which guarantees there are no duplicates.
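The following is a minimal Python sketch of the whitespace cleanup and the stop-word set described above. The names `clean_text` and `load_stop_words` are hypothetical and do not come from the project's scripts. The sketch also makes some assumptions that the post does not confirm:

- The stop-word files hold one word per line, because the post does not show their format.
- Empty pieces left after stripping are dropped.
- Stop words are lowercased.

```python
from collections.abc import Iterable
from pathlib import Path


def clean_text(text: str) -> str:
    """Split on line breaks, strip each piece, and join the non-empty pieces again."""
    # Assumption: pieces are re-joined with a single space and empty pieces are
    # dropped; the post does not say which separator the original script uses.
    pieces = (piece.strip() for piece in text.split("\n"))
    return " ".join(piece for piece in pieces if piece)


def load_stop_words(paths: Iterable) -> set[str]:
    """Read every stop-word file into one set, so duplicates collapse automatically."""
    stop_words: set[str] = set()
    for path in paths:
        # Assumption: one stop word per line, UTF-8 encoded.
        for line in Path(path).read_text(encoding="utf-8").splitlines():
            word = line.strip().lower()
            if word:
                stop_words.add(word)
    return stop_words


if __name__ == "__main__":
    raw = "  Primer párrafo del artículo.\n\n   Segundo párrafo.  \n"
    print(clean_text(raw))  # → Primer párrafo del artículo. Segundo párrafo.
```

Because the words are stored in lowercase, any later lookup has to be made against a lowercased copy of the article.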

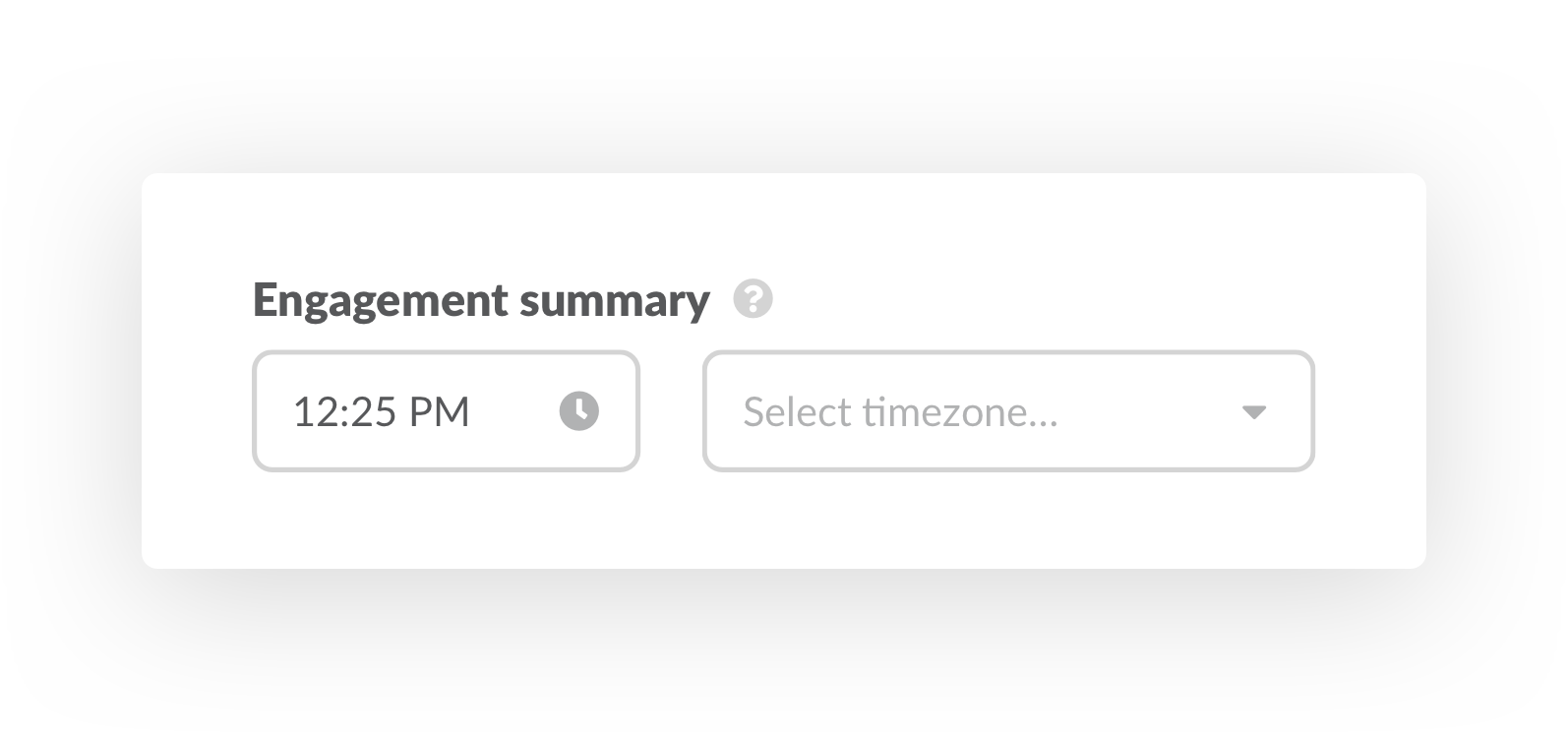

The whitelist is currently curated by myself. If a post and its url pass both checks (the submission has not been processed yet and its url is in the whitelist), web scraping is applied to the url. This is where things start getting interesting.

Before replying to the original submission, the bot checks the percentage of reduction achieved. If it is too low or too high, the bot skips the submission and moves on to the next one.

Web Scraper

The whitelist already contains more than 300 different news and blog websites. Creating a specialized web scraper for each one is simply not feasible, so the second best thing to do is to make the scraper as accurate as possible.

We start the web scraper in the usual way, with the Requests and BeautifulSoup libraries.

article_title = soup.
# If our title is too short we fall back to the first h1 tag.

It worked OK for the first websites I tested, but I soon realized that very few websites use it and those that do don't use it correctly.

# The article is still too short, let's try one more time.

Using all the previous methods greatly increased the overall accuracy of the scraper. In some cases I used partial words that share the same letters in English and Spanish (artic -> article/articulo). The scraper was now compatible with all the urls I tested.

We make a final check. If the article is still too short, we abort the process and move on to the next url; otherwise we move on to the summary algorithm.

Summary Algorithm

This algorithm was designed to work primarily on articles written in Spanish. It consists of the following steps:

1. Reformat and clean the original article by removing superfluous whitespace.
2. Make a copy of the original article and remove all commonly used words from it.
3. Split the copied article into words and score each word.
4. Split the original article into sentences and score each sentence using the scores of its words.
5. Take the top 5 sentences and top 5 words and return them in chronological order.

Before starting out we need to initialize the spaCy library. This loads the Spanish model, which is the one I use the most.
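The five steps of the summary algorithm can be sketched in plain Python. This is not the project's `Summary.py`. To stay self-contained, the sketch replaces spaCy's tokenizer and sentence splitter with simple regular expressions. The scoring rules are also assumptions, because the post does not specify them:

- A word scores its frequency divided by the frequency of the most common word.
- A sentence scores the sum of its word scores.

`summarize` is a hypothetical name, and the stop words are expected to be lowercase.

```python
import re
from collections import Counter

# Regex stand-ins for spaCy's tokenizer and sentence splitter (an assumption of
# this sketch; the project itself tokenizes with spaCy).
WORD_RE = re.compile(r"\w+")
SENTENCE_RE = re.compile(r"(?<=[.!?])\s+")


def summarize(article: str, stop_words: set[str], top_n: int = 5):
    """Return (top sentences, top words), both in the order they occur in the article."""
    # Step 1: split on line breaks, strip each piece, join the non-empty pieces.
    text = " ".join(p.strip() for p in article.split("\n") if p.strip())

    # Step 2: lowercased copy of the article with stop words removed.
    words = [w for w in WORD_RE.findall(text.lower()) if w not in stop_words]
    if not words:
        return [], []

    # Step 3 (assumed scoring): frequency relative to the most frequent word.
    counts = Counter(words)
    max_count = max(counts.values())
    word_scores = {w: c / max_count for w, c in counts.items()}

    # Step 4 (assumed scoring): a sentence scores the sum of its word scores;
    # stop words score 0 because they are absent from word_scores.
    sentences = SENTENCE_RE.split(text)
    sentence_scores = [
        sum(word_scores.get(w, 0.0) for w in WORD_RE.findall(s.lower()))
        for s in sentences
    ]

    # Step 5: take the top N by score (ties keep the earlier sentence),
    # then restore chronological order.
    ranked = sorted(range(len(sentences)), key=lambda i: sentence_scores[i], reverse=True)
    top_sentences = [sentences[i] for i in sorted(ranked[:top_n])]

    # Order the top words by where they first appear in the article.
    first_seen = {}
    for position, w in enumerate(words):
        first_seen.setdefault(w, position)
    top_words = sorted((w for w, _ in counts.most_common(top_n)), key=lambda w: first_seen[w])
    return top_sentences, top_words


if __name__ == "__main__":
    article = "El gobierno anunció un plan.\nEl plan incluye escuelas.\n\nLas escuelas abren en marzo. Hoy llueve."
    sentences, keywords = summarize(article, {"el", "un", "las", "en"}, top_n=2)
    print(sentences)  # → ['El gobierno anunció un plan.', 'El plan incluye escuelas.']
    print(keywords)   # → ['plan', 'escuelas']
```

The selected sentences are returned in their original order rather than by score. This keeps the summary reading like a shortened version of the article instead of a shuffled list.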
This project implements a custom algorithm to extract the most important sentences and keywords from Spanish and English news articles. It was fully developed in Python and is inspired by similar projects on Reddit news subreddits that use term frequency–inverse document frequency (tf–idf).

- Scraper.py : A Python script that performs web scraping on a given HTML source; it extracts the article title, date and body.
- Summary.py : A Python script that applies a custom algorithm to a string of text and extracts the top-ranked sentences and words.
- Bot.py : A Reddit bot that checks a subreddit for its latest submissions. It manages a list of already processed submissions to avoid duplicates.

Requirements

This project uses the following Python libraries:

- spaCy : Used to tokenize the article into sentences and words.
- PRAW : Makes using the Reddit API very easy.
- Requests : Used to perform HTTP GET requests to the article urls.
- BeautifulSoup : Used to extract the article text.
- html5lib : This parser had better compatibility when used with BeautifulSoup.
- tldextract : Used to extract the domain from a url.
- wordcloud : Used to create word clouds from the article text.

After installing the spaCy library you must install a language model to be able to tokenize the article. To see which models are available for each language, please check the spaCy documentation.

Reddit Bot

The bot is simple in nature: it uses the PRAW library, which is very straightforward to use. The bot polls a subreddit every 10 minutes to get its latest submissions. It first checks that the submission hasn't already been processed, then checks whether the submission url is in the whitelist.
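The bot runs two checks on each submission and one more before replying. These can be sketched as plain functions, under the following assumptions (all hypothetical):

- The standard library's `urllib.parse` stands in for tldextract. It only strips a leading `www.` and does not understand public suffixes.
- The reduction is measured in characters.
- The 20 to 70 percent acceptance window is invented, because the post gives no thresholds.

The PRAW polling loop is left out so the sketch runs without Reddit credentials.

```python
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Rough stand-in for tldextract: lowercase host name without a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def should_process(submission_id: str, url: str, processed_ids: set, whitelist: set) -> bool:
    """The bot's two checks: not processed before, and the url's domain is whitelisted."""
    if submission_id in processed_ids:
        return False
    return domain_of(url) in whitelist


def reduction_ok(article: str, summary: str, low: float = 20.0, high: float = 70.0) -> bool:
    """Accept a summary only if it shortened the article by low..high percent.

    The 20/70 defaults are hypothetical; the post gives no thresholds.
    """
    if not article:
        return False
    # Percentage of characters removed by the summary.
    reduction = 100 * (1 - len(summary) / len(article))
    return low <= reduction <= high
```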
